More Python tips

# import the pandas package

import pandas as pd

# import numpy

import numpy as np

# import the package we'll use for plotting

import matplotlib.pyplot as plt

# this tells the jupyter notebook to show plots "inline" with other output here in the notebook

%matplotlib inline

Opening data files (with pandas):

# Location of the data file

Skykomish_data_file = 'Skykomish_peak_flow_12134500_skykomish_river_near_gold_bar.xlsx'

# Use pandas.read_excel() function to open this file.

Skykomish_data = pd.read_excel(Skykomish_data_file)

/opt/hostedtoolcache/Python/3.11.14/x64/lib/python3.11/site-packages/openpyxl/worksheet/_reader.py:329: UserWarning: Unknown extension is not supported and will be removed

warn(msg)

# Now we can see the dataset we loaded:

Skykomish_data

| | date of peak | water year | peak value (cfs) | gage_ht (feet) |

|---|---|---|---|---|

| 0 | 1928-10-09 | 1929 | 18800 | 10.55 |

| 1 | 1930-02-05 | 1930 | 15800 | 10.44 |

| 2 | 1931-01-28 | 1931 | 35100 | 14.08 |

| 3 | 1932-02-26 | 1932 | 83300 | 20.70 |

| 4 | 1932-11-13 | 1933 | 72500 | 19.50 |

| ... | ... | ... | ... | ... |

| 86 | 2015-11-17 | 2016 | 95900 | 21.73 |

| 87 | 2016-10-20 | 2017 | 41000 | 15.76 |

| 88 | 2017-11-23 | 2018 | 54200 | 17.50 |

| 89 | 2018-11-02 | 2019 | 35200 | 14.98 |

| 90 | 2020-02-01 | 2020 | 72200 | 19.50 |

91 rows × 4 columns
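Printing the whole DataFrame works for 91 rows, but a few summary attributes and methods are often quicker to read. The sketch below rebuilds the first five rows of the table above by hand so that it runs without the Excel file; the name `sample` is only for illustration and is not part of the original notebook.

```python
import pandas as pd

# First five rows of the table above, typed in by hand for illustration
sample = pd.DataFrame({
    "date of peak": pd.to_datetime(
        ["1928-10-09", "1930-02-05", "1931-01-28", "1932-02-26", "1932-11-13"]
    ),
    "water year": [1929, 1930, 1931, 1932, 1933],
    "peak value (cfs)": [18800, 15800, 35100, 83300, 72500],
    "gage_ht (feet)": [10.55, 10.44, 14.08, 20.70, 19.50],
})

print(sample.head(3))   # first three rows
print(sample.shape)     # (number of rows, number of columns)
print(sample.dtypes)    # data type of each column
print(sample["peak value (cfs)"].describe())  # count, mean, std, min, quartiles, max
```

The same calls should work unchanged on `Skykomish_data`.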

# Look at a single column, using the header name

Skykomish_data['water year']

0 1929

1 1930

2 1931

3 1932

4 1933

...

86 2016

87 2017

88 2018

89 2019

90 2020

Name: water year, Length: 91, dtype: int64
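Selecting by header name extends naturally to several columns and to row filters. As a minimal sketch, again using a hand-typed `sample` DataFrame (an assumption made so the example runs without the data file), the code below computes a mean with numpy, selects two columns at once, and keeps only the years whose peak exceeds an arbitrary threshold of 50000 cfs.

```python
import numpy as np
import pandas as pd

sample = pd.DataFrame({
    "water year": [1929, 1930, 1931, 1932, 1933],
    "peak value (cfs)": [18800, 15800, 35100, 83300, 72500],
})

# A single column is a pandas Series; .values gives the underlying numpy array
peaks = sample["peak value (cfs)"]
mean_peak = np.mean(peaks.values)

# A list of header names selects several columns at once (result is a DataFrame)
subset = sample[["water year", "peak value (cfs)"]]

# A boolean condition on a column selects the rows where it is True
big_years = sample[sample["peak value (cfs)"] > 50000]

print(mean_peak)
print(big_years["water year"].tolist())
```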

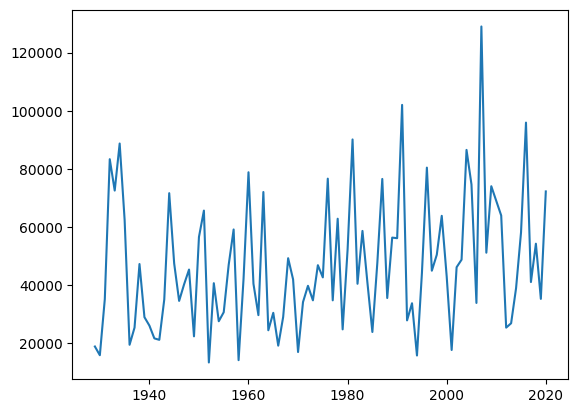

# Plot data, specifying our x and y with column header names

plt.plot(Skykomish_data['water year'],Skykomish_data['peak value (cfs)'])

[<matplotlib.lines.Line2D at 0x7fbe17cfd6d0>]
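A bare `plt.plot` call leaves the axes unlabeled. One possible way to add labels, a title, and a legend is sketched below with five hand-typed values from the table; the label text and figure size are illustrative choices, not taken from the original notebook.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend for running as a script; omit this line inside a notebook
import matplotlib.pyplot as plt

# Five values copied from the table above
water_year = [1929, 1930, 1931, 1932, 1933]
peak_cfs = [18800, 15800, 35100, 83300, 72500]

fig, ax = plt.subplots(figsize=(7, 4))
ax.plot(water_year, peak_cfs, marker="o", label="annual peak flow")
ax.set_xlabel("Water year")
ax.set_ylabel("Peak flow (cfs)")
ax.set_title("Skykomish River near Gold Bar: annual peak flow")
ax.legend()
```

With the real dataset, the two lists would be replaced by `Skykomish_data['water year']` and `Skykomish_data['peak value (cfs)']`.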

How to find help/documentation?

help(np.mean)

Help on _ArrayFunctionDispatcher in module numpy:

mean(a, axis=None, dtype=None, out=None, keepdims=<no value>, *, where=<no value>)

Compute the arithmetic mean along the specified axis.

Returns the average of the array elements. The average is taken over

the flattened array by default, otherwise over the specified axis.

`float64` intermediate and return values are used for integer inputs.

Parameters

----------

a : array_like

Array containing numbers whose mean is desired. If `a` is not an

array, a conversion is attempted.

axis : None or int or tuple of ints, optional

Axis or axes along which the means are computed. The default is to

compute the mean of the flattened array.

If this is a tuple of ints, a mean is performed over multiple axes,

instead of a single axis or all the axes as before.

dtype : data-type, optional

Type to use in computing the mean. For integer inputs, the default

is `float64`; for floating point inputs, it is the same as the

input dtype.

out : ndarray, optional

Alternate output array in which to place the result. The default

is ``None``; if provided, it must have the same shape as the

expected output, but the type will be cast if necessary.

See :ref:`ufuncs-output-type` for more details.

keepdims : bool, optional

If this is set to True, the axes which are reduced are left

in the result as dimensions with size one. With this option,

the result will broadcast correctly against the input array.

If the default value is passed, then `keepdims` will not be

passed through to the `mean` method of sub-classes of

`ndarray`, however any non-default value will be. If the

sub-class' method does not implement `keepdims` any

exceptions will be raised.

where : array_like of bool, optional

Elements to include in the mean. See `~numpy.ufunc.reduce` for details.

.. versionadded:: 1.20.0

Returns

-------

m : ndarray, see dtype parameter above

If `out=None`, returns a new array containing the mean values,

otherwise a reference to the output array is returned.

See Also

--------

average : Weighted average

std, var, nanmean, nanstd, nanvar

Notes

-----

The arithmetic mean is the sum of the elements along the axis divided

by the number of elements.

Note that for floating-point input, the mean is computed using the

same precision the input has. Depending on the input data, this can

cause the results to be inaccurate, especially for `float32` (see

example below). Specifying a higher-precision accumulator using the

`dtype` keyword can alleviate this issue.

By default, `float16` results are computed using `float32` intermediates

for extra precision.

Examples

--------

>>> import numpy as np

>>> a = np.array([[1, 2], [3, 4]])

>>> np.mean(a)

2.5

>>> np.mean(a, axis=0)

array([2., 3.])

>>> np.mean(a, axis=1)

array([1.5, 3.5])

In single precision, `mean` can be inaccurate:

>>> a = np.zeros((2, 512*512), dtype=np.float32)

>>> a[0, :] = 1.0

>>> a[1, :] = 0.1

>>> np.mean(a)

np.float32(0.54999924)

Computing the mean in float64 is more accurate:

>>> np.mean(a, dtype=np.float64)

0.55000000074505806 # may vary

Computing the mean in timedelta64 is available:

>>> b = np.array([1, 3], dtype="timedelta64[D]")

>>> np.mean(b)

np.timedelta64(2,'D')

Specifying a where argument:

>>> a = np.array([[5, 9, 13], [14, 10, 12], [11, 15, 19]])

>>> np.mean(a)

12.0

>>> np.mean(a, where=[[True], [False], [False]])

9.0

np.mean?
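The `?` suffix is an IPython/Jupyter feature and does not work in a plain Python script, whereas `help()` works everywhere. When a docstring is long, it can also be handled as an ordinary string. The sketch below uses the standard-library `inspect.getdoc` to pull out the one-line summary and to search the text; the variable names are illustrative.

```python
import inspect
import numpy as np

# inspect.getdoc returns the docstring that help() displays, as a cleaned-up string
doc = inspect.getdoc(np.mean)

# The first line of a numpy docstring is a one-sentence summary
summary = doc.splitlines()[0]
print(summary)

# Searching the text is handy when the docstring is long
mentions_nanmean = "nanmean" in doc
print(mentions_nanmean)
```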

help(pd.read_excel)

Help on function read_excel in module pandas:

read_excel(io, sheet_name: 'str | int | list[IntStrT] | None' = 0, *, header: 'int | Sequence[int] | None' = 0, names: 'SequenceNotStr[Hashable] | range | None' = None, index_col: 'int | str | Sequence[int] | None' = None, usecols: 'int | str | Sequence[int] | Sequence[str] | Callable[[HashableT], bool] | None' = None, dtype: 'DtypeArg | None' = None, engine: "Literal['xlrd', 'openpyxl', 'odf', 'pyxlsb', 'calamine'] | None" = None, converters: 'dict[str, Callable] | dict[int, Callable] | None' = None, true_values: 'Iterable[Hashable] | None' = None, false_values: 'Iterable[Hashable] | None' = None, skiprows: 'Sequence[int] | int | Callable[[int], object] | None' = None, nrows: 'int | None' = None, na_values=None, keep_default_na: 'bool' = True, na_filter: 'bool' = True, verbose: 'bool' = False, parse_dates: 'list | dict | bool' = False, date_format: 'dict[Hashable, str] | str | None' = None, thousands: 'str | None' = None, decimal: 'str' = '.', comment: 'str | None' = None, skipfooter: 'int' = 0, storage_options: 'StorageOptions | None' = None, dtype_backend: 'DtypeBackend | lib.NoDefault' = <no_default>, engine_kwargs: 'dict | None' = None) -> 'DataFrame | dict[IntStrT, DataFrame]'

Read an Excel file into a ``DataFrame``.

Supports `xls`, `xlsx`, `xlsm`, `xlsb`, `odf`, `ods` and `odt` file extensions

read from a local filesystem or URL. Supports an option to read

a single sheet or a list of sheets.

Parameters

----------

io : str, ExcelFile, xlrd.Book, path object, or file-like object

Any valid string path is acceptable. The string could be a URL. Valid

URL schemes include http, ftp, s3, and file. For file URLs, a host is

expected. A local file could be: ``file://localhost/path/to/table.xlsx``.

If you want to pass in a path object, pandas accepts any ``os.PathLike``.

By file-like object, we refer to objects with a ``read()`` method,

such as a file handle (e.g. via builtin ``open`` function)

or ``StringIO``.

sheet_name : str, int, list, or None, default 0

Strings are used for sheet names. Integers are used in zero-indexed

sheet positions (chart sheets do not count as a sheet position).

Lists of strings/integers are used to request multiple sheets.

When ``None``, will return a dictionary containing DataFrames for each sheet.

Available cases:

* Defaults to ``0``: 1st sheet as a `DataFrame`

* ``1``: 2nd sheet as a `DataFrame`

* ``"Sheet1"``: Load sheet with name "Sheet1"

* ``[0, 1, "Sheet5"]``: Load first, second and sheet named "Sheet5"

as a dict of `DataFrame`

* ``None``: Returns a dictionary containing DataFrames for each sheet.

header : int, list of int, default 0

Row (0-indexed) to use for the column labels of the parsed

DataFrame. If a list of integers is passed those row positions will

be combined into a ``MultiIndex``. Use None if there is no header.

names : array-like, default None

List of column names to use. If file contains no header row,

then you should explicitly pass header=None.

index_col : int, str, list of int, default None

Column (0-indexed) to use as the row labels of the DataFrame.

Pass None if there is no such column. If a list is passed,

those columns will be combined into a ``MultiIndex``. If a

subset of data is selected with ``usecols``, index_col

is based on the subset.

Missing values will be forward filled to allow roundtripping with

``to_excel`` for ``merged_cells=True``. To avoid forward filling the

missing values use ``set_index`` after reading the data instead of

``index_col``.

usecols : str, list-like, or callable, default None

* If None, then parse all columns.

* If str, then indicates comma separated list of Excel column letters

and column ranges (e.g. "A:E" or "A,C,E:F"). Ranges are inclusive of

both sides.

* If list of int, then indicates list of column numbers to be parsed

(0-indexed).

* If list of string, then indicates list of column names to be parsed.

* If callable, then evaluate each column name against it and parse the

column if the callable returns ``True``.

Returns a subset of the columns according to behavior above.

dtype : Type name or dict of column -> type, default None

Data type for data or columns. E.g. {'a': np.float64, 'b': np.int32}

Use ``object`` to preserve data as stored in Excel and not interpret dtype,

which will necessarily result in ``object`` dtype.

If converters are specified, they will be applied INSTEAD

of dtype conversion.

If you use ``None``, it will infer the dtype of each column based on the data.

engine : {'openpyxl', 'calamine', 'odf', 'pyxlsb', 'xlrd'}, default None

If io is not a buffer or path, this must be set to identify io.

Engine compatibility :

- ``openpyxl`` supports newer Excel file formats.

- ``calamine`` supports Excel (.xls, .xlsx, .xlsm, .xlsb)

and OpenDocument (.ods) file formats.

- ``odf`` supports OpenDocument file formats (.odf, .ods, .odt).

- ``pyxlsb`` supports Binary Excel files.

- ``xlrd`` supports old-style Excel files (.xls).

When ``engine=None``, the following logic will be used to determine the engine:

- If ``path_or_buffer`` is an OpenDocument format (.odf, .ods, .odt),

then `odf <https://pypi.org/project/odfpy/>`_ will be used.

- Otherwise if ``path_or_buffer`` is an xls format, ``xlrd`` will be used.

- Otherwise if ``path_or_buffer`` is in xlsb format, ``pyxlsb`` will be used.

- Otherwise ``openpyxl`` will be used.

converters : dict, default None

Dict of functions for converting values in certain columns. Keys can

either be integers or column labels, values are functions that take one

input argument, the Excel cell content, and return the transformed

content.

true_values : list, default None

Values to consider as True.

false_values : list, default None

Values to consider as False.

skiprows : list-like, int, or callable, optional

Line numbers to skip (0-indexed) or number of lines to skip (int) at the

start of the file. If callable, the callable function will be evaluated

against the row indices, returning True if the row should be skipped and

False otherwise. An example of a valid callable argument would be ``lambda

x: x in [0, 2]``.

nrows : int, default None

Number of rows to parse. Does not include header rows.

na_values : scalar, str, list-like, or dict, default None

Additional strings to recognize as NA/NaN. If dict passed, specific

per-column NA values. By default the following values are interpreted

as NaN: '', '#N/A', '#N/A N/A', '#NA', '-1.#IND', '-1.#QNAN', '-NaN', '-nan',

'1.#IND', '1.#QNAN', '<NA>', 'N/A', 'NA', 'NULL', 'NaN', 'None',

'n/a', 'nan', 'null'.

keep_default_na : bool, default True

Whether or not to include the default NaN values when parsing the data.

Depending on whether ``na_values`` is passed in, the behavior is as follows:

* If ``keep_default_na`` is True, and ``na_values`` are specified,

``na_values`` is appended to the default NaN values used for parsing.

* If ``keep_default_na`` is True, and ``na_values`` are not specified, only

the default NaN values are used for parsing.

* If ``keep_default_na`` is False, and ``na_values`` are specified, only

the NaN values specified ``na_values`` are used for parsing.

* If ``keep_default_na`` is False, and ``na_values`` are not specified, no

strings will be parsed as NaN.

Note that if `na_filter` is passed in as False, the ``keep_default_na`` and

``na_values`` parameters will be ignored.

na_filter : bool, default True

Detect missing value markers (empty strings and the value of na_values). In

data without any NAs, passing ``na_filter=False`` can improve the

performance of reading a large file.

verbose : bool, default False

Indicate number of NA values placed in non-numeric columns.

parse_dates : bool, list-like, or dict, default False

The behavior is as follows:

* ``bool``. If True -> try parsing the index.

* ``list`` of int or names. e.g. If [1, 2, 3] -> try parsing columns 1, 2, 3

each as a separate date column.

* ``list`` of lists. e.g. If [[1, 3]] -> combine columns 1 and 3 and parse as

a single date column.

* ``dict``, e.g. {'foo' : [1, 3]} -> parse columns 1, 3 as date and call

result 'foo'

If a column or index contains an unparsable date, the entire column or

index will be returned unaltered as an object data type. If you don`t want to

parse some cells as date just change their type in Excel to "Text".

For non-standard datetime parsing, use ``pd.to_datetime`` after

``pd.read_excel``.

Note: A fast-path exists for iso8601-formatted dates.

date_format : str or dict of column -> format, default ``None``

If used in conjunction with ``parse_dates``, will parse dates according to this

format. For anything more complex,

please read in as ``object`` and then apply :func:`to_datetime` as-needed.

.. versionadded:: 2.0.0

thousands : str, default None

Thousands separator for parsing string columns to numeric. Note that

this parameter is only necessary for columns stored as TEXT in Excel,

any numeric columns will automatically be parsed, regardless of display

format.

decimal : str, default '.'

Character to recognize as decimal point for parsing string columns to numeric.

Note that this parameter is only necessary for columns stored as TEXT in Excel,

any numeric columns will automatically be parsed, regardless of display

format.(e.g. use ',' for European data).

comment : str, default None

Comments out remainder of line. Pass a character or characters to this

argument to indicate comments in the input file. Any data between the

comment string and the end of the current line is ignored.

skipfooter : int, default 0

Rows at the end to skip (0-indexed).

storage_options : dict, optional

Extra options that make sense for a particular storage connection, e.g.

host, port, username, password, etc. For HTTP(S) URLs the key-value pairs

are forwarded to ``urllib.request.Request`` as header options. For other

URLs (e.g. starting with "s3://", and "gcs://") the key-value pairs are

forwarded to ``fsspec.open``. Please see ``fsspec`` and ``urllib`` for more

details, and for more examples on storage options refer `here

<https://pandas.pydata.org/docs/user_guide/io.html?

highlight=storage_options#reading-writing-remote-files>`_.

dtype_backend : {'numpy_nullable', 'pyarrow'}

Back-end data type applied to the resultant :class:`DataFrame`

(still experimental). If not specified, the default behavior

is to not use nullable data types. If specified, the behavior

is as follows:

* ``"numpy_nullable"``: returns nullable-dtype-backed :class:`DataFrame`

* ``"pyarrow"``: returns pyarrow-backed nullable

:class:`ArrowDtype` :class:`DataFrame`

.. versionadded:: 2.0

engine_kwargs : dict, optional

Arbitrary keyword arguments passed to excel engine.

Returns

-------

DataFrame or dict of DataFrames

DataFrame from the passed in Excel file. See notes in sheet_name

argument for more information on when a dict of DataFrames is returned.

See Also

--------

DataFrame.to_excel : Write DataFrame to an Excel file.

DataFrame.to_csv : Write DataFrame to a comma-separated values (csv) file.

read_csv : Read a comma-separated values (csv) file into DataFrame.

read_fwf : Read a table of fixed-width formatted lines into DataFrame.

Notes

-----

For specific information on the methods used for each Excel engine, refer to the

pandas

:ref:`user guide <io.excel_reader>`

Examples

--------

The file can be read using the file name as string or an open file object:

>>> pd.read_excel("tmp.xlsx", index_col=0) # doctest: +SKIP

Name Value

0 string1 1

1 string2 2

2 #Comment 3

>>> pd.read_excel(open("tmp.xlsx", "rb"), sheet_name="Sheet3") # doctest: +SKIP

Unnamed: 0 Name Value

0 0 string1 1

1 1 string2 2

2 2 #Comment 3

Index and header can be specified via the `index_col` and `header` arguments

>>> pd.read_excel("tmp.xlsx", index_col=None, header=None) # doctest: +SKIP

0 1 2

0 NaN Name Value

1 0.0 string1 1

2 1.0 string2 2

3 2.0 #Comment 3

Column types are inferred but can be explicitly specified

>>> pd.read_excel(

... "tmp.xlsx", index_col=0, dtype={"Name": str, "Value": float}

... ) # doctest: +SKIP

Name Value

0 string1 1.0

1 string2 2.0

2 #Comment 3.0

True, False, and NA values, and thousands separators have defaults,

but can be explicitly specified, too. Supply the values you would like

as strings or lists of strings!

>>> pd.read_excel(

... "tmp.xlsx", index_col=0, na_values=["string1", "string2"]

... ) # doctest: +SKIP

Name Value

0 NaN 1

1 NaN 2

2 #Comment 3

Comment lines in the excel input file can be skipped using the

``comment`` kwarg.

>>> pd.read_excel("tmp.xlsx", index_col=0, comment="#") # doctest: +SKIP

Name Value

0 string1 1.0

1 string2 2.0

2 None NaN

pd.read_excel?
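Several of the keyword arguments documented above (`usecols`, `index_col`, `parse_dates`) are also accepted by `pd.read_csv`. To stay self-contained, the sketch below demonstrates them on in-memory CSV text rather than an Excel file; this substitution is an assumption made for illustration, and the three data rows are copied from the table earlier on this page.

```python
import io
import pandas as pd

# Three rows copied from the table earlier on this page, stored as in-memory CSV text
csv_text = """date of peak,water year,peak value (cfs),gage_ht (feet)
1928-10-09,1929,18800,10.55
1930-02-05,1930,15800,10.44
1931-01-28,1931,35100,14.08
"""

df = pd.read_csv(
    io.StringIO(csv_text),
    usecols=["date of peak", "water year", "peak value (cfs)"],  # leave out gage height
    index_col="water year",        # use water year as the row labels
    parse_dates=["date of peak"],  # convert this column to datetime values
)

print(df)
print(df.dtypes)
```

Passing the same three keyword arguments to `pd.read_excel(Skykomish_data_file, ...)` should behave similarly, though that call is not run here.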

Writing functions:

def add_values(x, y):
    z = x + y
    return z

result = add_values(1,2)

print(result)

3
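Functions you write can be documented the same way as library functions. As an illustrative variation on `add_values` (the default value for `y` and the docstring wording are additions, not part of the original notebook), the sketch below adds a docstring so that `help()` has something to show.

```python
def add_values(x, y=0):
    """Return the sum of x and y.

    y defaults to 0, so add_values(x) returns x unchanged.
    """
    return x + y


print(add_values(1, 2))
print(add_values(5))
help(add_values)  # prints the signature and the docstring written above
```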

Import your custom functions:

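# The lines below show the contents of my_functions.py, which must be saved
# in the same directory as this notebook for the import to work:

# def subtract_values(x, y):
#     z = x - y
#     return z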

import my_functions

---------------------------------------------------------------------------

ModuleNotFoundError Traceback (most recent call last)

Cell In[14], line 1

----> 1 import my_functions

ModuleNotFoundError: No module named 'my_functions'

result = my_functions.subtract_values(10,5)

print(result)
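A `ModuleNotFoundError` like the one above means Python could not find a file named `my_functions.py` in any directory on its search path (`sys.path`). The sketch below reproduces the whole workflow in one place by writing the module to a temporary directory and adding that directory to `sys.path`; the temporary directory is an assumption made so the example is self-contained, and in normal use you would simply save `my_functions.py` next to the notebook.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Write a small module file. In practice you would create my_functions.py
# in a text editor and save it in the same folder as the notebook.
module_dir = Path(tempfile.mkdtemp())
(module_dir / "my_functions.py").write_text(
    "def subtract_values(x, y):\n"
    "    z = x - y\n"
    "    return z\n"
)

# Python only finds modules in directories listed in sys.path
sys.path.insert(0, str(module_dir))
importlib.invalidate_caches()

import my_functions

result = my_functions.subtract_values(10, 5)
print(result)
```

If you edit `my_functions.py` after importing it, `importlib.reload(my_functions)` picks up the changes without restarting the kernel.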